Science & Exploration: Discover the World Through Research and Technology

- EMO learned precise lip movements by watching its reflection in a mirror, mapping 26 facial motors to visual feedback.

- Researchers used a vision-to-action (VLA) AI that converts visual input directly into coordinated facial motor actions.

- EMO trained further on hours of YouTube speech videos, aligning visual mouth shapes with spoken sounds across 10 languages.

- Human evaluators preferred the VLA method (about 62%) over amplitude and nearest-neighbor baselines for realistic lip-syncing.

Can you say for certain that whoever is speaking with you is 100% not a robot? Soon, you may not be so sure.

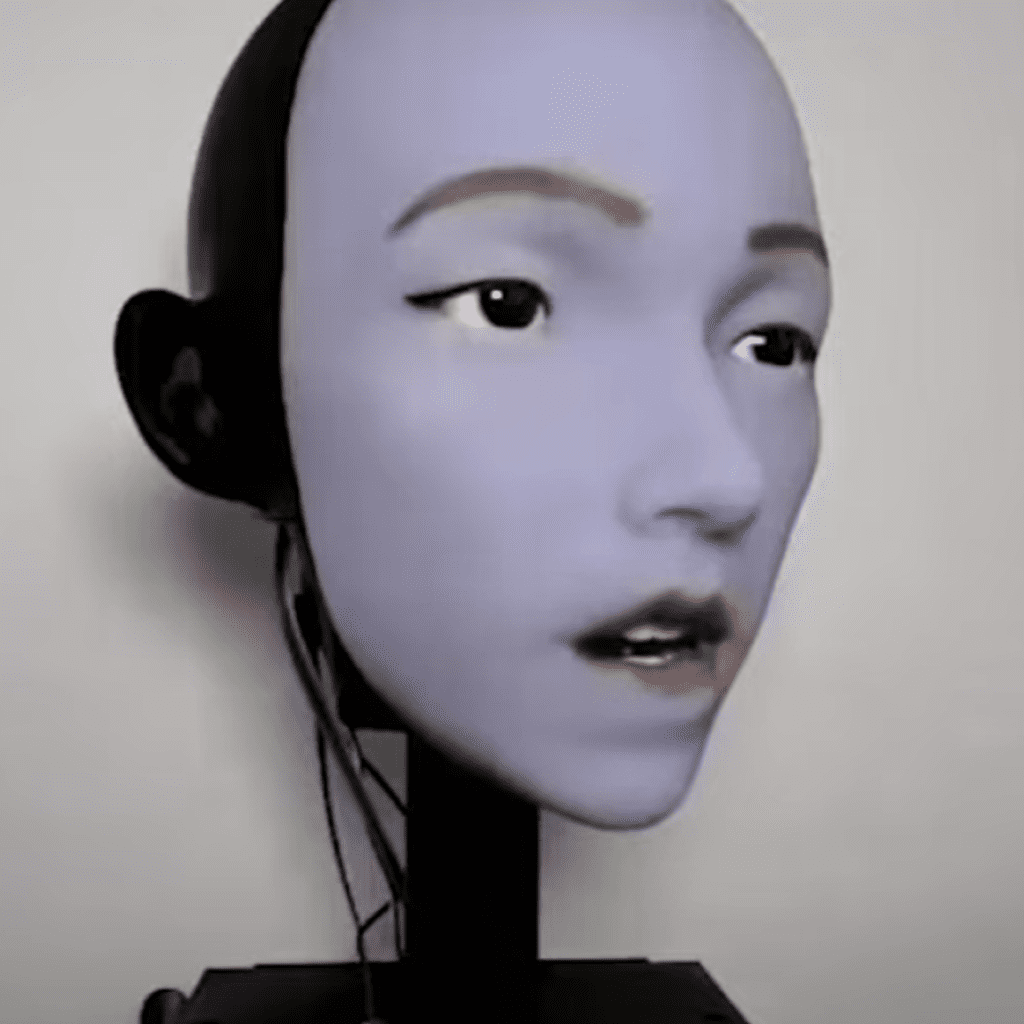

For the first time, scientists have built a robot that can move its mouth just like a human. This means it avoids the so-called "uncanny valley" effect, where a robot's movements appear unsettling because they are uncomfortably close to natural, yet do not quite reach that threshold.

The Columbia University researchers achieved the feat by letting their robot, EMO, study itself in a mirror. It learned how its flexible face and silicone lips would move in response to the precise actions of its 26 facial motors, each capable of moving in up to 10 degrees of freedom.

They described their methods in a study published Jan. 14 in the journal Science Robotics.

How EMO learned to move its face like a human

EMO uses an artificial intelligence (AI) system called a "vision-to-action" language model (VLA), meaning it can learn how to translate what it sees into coordinated physical movements without predefined rules. During training, the humanoid robot made many seemingly random expressions and lip movements while it watched its own reflection in the mirror.
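The mirror stage can be pictured as a trial-and-error loop: try a motor command, observe the result, remember the pairing, and later look up the command that produced the closest match to a desired mouth shape. The sketch below is only a toy illustration of that idea, not the researchers' method: `toy_face`, the two-motor command, and the lookup-based recall are all assumptions made for illustration, whereas the real robot has 26 motors and a learned model.

```python
import math
import random

def toy_face(command):
    """Hypothetical stand-in for the real face: motor command -> observed lip landmark."""
    a, b = command
    return (0.8 * a + 0.1 * b, 0.5 * b - 0.2 * a)

def babble(num_trials, seed=0):
    """Try random motor commands and record what the 'mirror' shows for each one."""
    rng = random.Random(seed)
    memory = []
    for _ in range(num_trials):
        command = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        memory.append((command, toy_face(command)))
    return memory

def command_for(target_landmark, memory):
    """Pick the remembered command whose observed landmark is closest to the target."""
    command, _ = min(memory, key=lambda pair: math.dist(pair[1], target_landmark))
    return command

# Usage: after babbling, ask for the command that best reproduces a target shape.
memory = babble(2000)
target = toy_face((0.3, -0.4))
best_command = command_for(target, memory)
```

A lookup table like this scales poorly as motors are added, which is one reason a learned model is the more plausible choice for a face with many actuators.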

Next, the researchers sat EMO in front of hours of YouTube videos showing humans talking in different languages and singing. This allowed it to connect its knowledge of how its motors produced facial movements to the corresponding sounds, all without any understanding of what was being said. Eventually, EMO was able to take spoken audio in 10 different languages and sync its lips near-perfectly.

"We had particular difficulties with hard sounds like 'B' and with sounds involving lip puckering, such as 'W'," Hod Lipson, an engineering professor and the director of Columbia's Creative Machines Lab, said in a statement. "But these abilities will likely improve with time and practice."

Many a roboticist has tried and failed to create a convincing humanoid, so before EMO was introduced to the world, it had to be tested in front of real people. The researchers showed videos of the robot talking using the VLA model, and two other approaches for controlling its mouth, to 1,300 volunteers, along with a reference video showing ideal lip movement.

The two other approaches were an amplitude baseline, in which EMO moved its lips based on the loudness of the audio, and a nearest-neighbor landmarks baseline, in which it mimicked facial movements it had seen others make that produced similar sounds. The volunteers were asked to choose the clip that best matched the ideal lip movement, and they chose VLA in 62.46% of cases, compared with 23.15% and 14.38% for the amplitude and nearest-neighbor landmarks baselines, respectively.
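To make the two comparison methods concrete, here is a minimal sketch of what an amplitude approach and a nearest-neighbor approach could look like. It is an illustration under assumptions, not the study's code: the function names, the frame size, the two-number audio features, and the pose labels are all hypothetical.

```python
import math

def rms_per_frame(samples, frame_size):
    """Root-mean-square loudness for each consecutive frame of audio samples."""
    frames = [samples[i:i + frame_size] for i in range(0, len(samples), frame_size)]
    return [math.sqrt(sum(s * s for s in f) / len(f)) for f in frames if f]

def amplitude_to_mouth_opening(samples, frame_size=4, max_opening=1.0):
    """Amplitude idea: louder audio opens the mouth wider (scaled to the loudest frame)."""
    loudness = rms_per_frame(samples, frame_size)
    peak = max(loudness, default=0.0)
    if peak == 0.0:
        return [0.0 for _ in loudness]
    return [max_opening * value / peak for value in loudness]

def nearest_neighbor_pose(audio_feature, library):
    """Nearest-neighbor idea: reuse the stored pose whose audio feature is closest."""
    _, pose = min(library, key=lambda item: math.dist(item[0], audio_feature))
    return pose

# Usage with made-up data: three audio frames and a tiny pose library.
samples = [0, 0, 0, 0, 1, -1, 1, -1, 0.5, -0.5, 0.5, -0.5]
openings = amplitude_to_mouth_opening(samples)
library = [((0.0, 0.0), "closed"), ((1.0, 0.0), "open"), ((0.0, 1.0), "pucker")]
pose = nearest_neighbor_pose((0.9, 0.1), library)
```

The amplitude version reacts only to volume, so it cannot tell a "B" from a "W" at the same loudness, while the nearest-neighbor version can only replay poses it has already stored.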

Robot carers will need friendly faces

While there are differences across genders and cultures in how people distribute their gaze, humans generally rely heavily on facial cues when interacting with each other. A 2021 eye-tracking study found that we look at the face of our conversation partners 87% of the time, with about 10 to 15% of that time focused specifically on the mouth. Other research has shown that mouth movements are so important that they even affect what we hear.
